Duration

Description

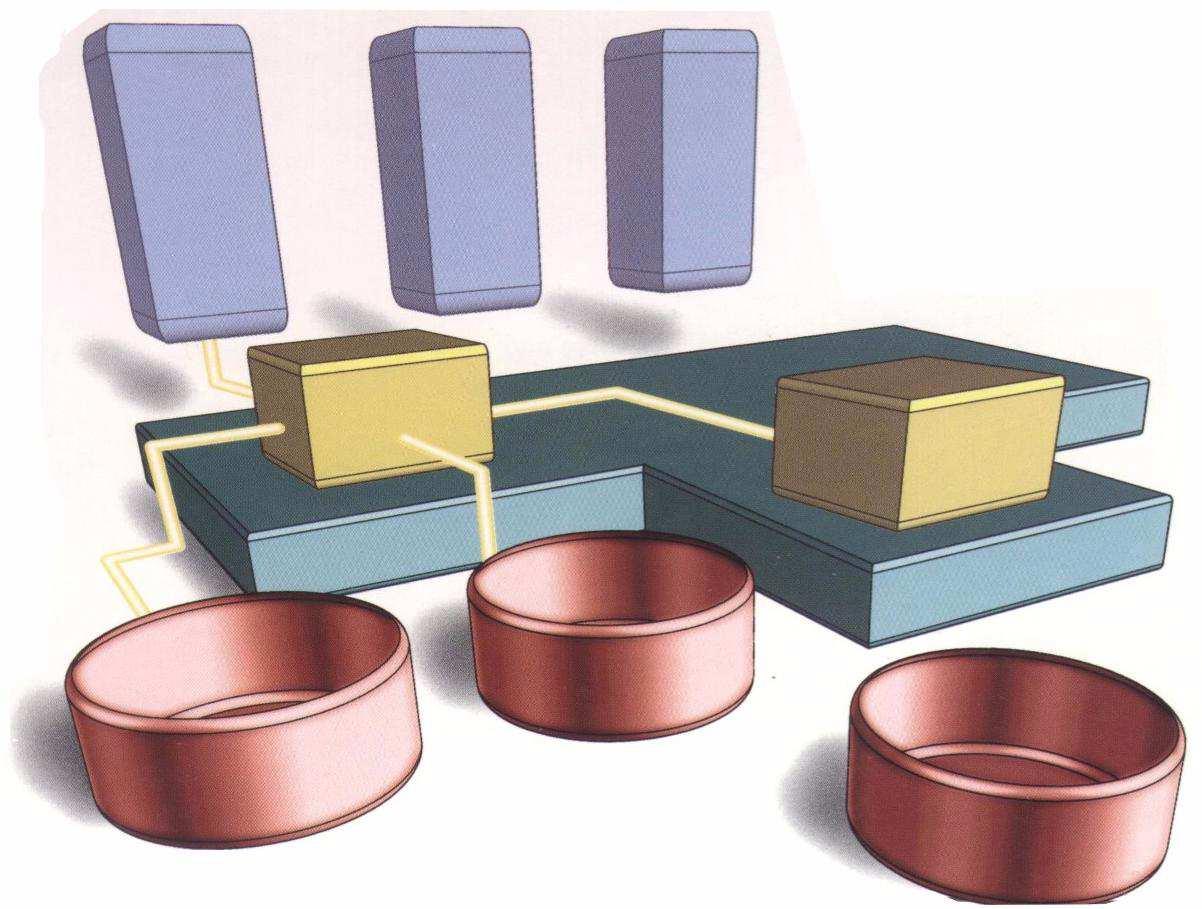

Processing highly connected data as graphs is becoming increasingly essential in many domains. Prominent examples are social networks such as Facebook and Twitter, information networks like the World Wide Web, and biological networks. One crucial similarity of these domain-specific datasets is their inherent graph structure, which makes them suitable for analysis with graph algorithms. The datasets share two more characteristics: they are huge, making it hard or even impossible to process them on a single machine, and they grow over time, which classifies them as temporal graphs. To analyze these large-scale temporal datasets, we started developing a framework called “Gradoop” (Graph Analytics on Hadoop®) with the following three main objectives:

- developing a temporal graph data model, including operators for the definition of analytical pipelines,

- integrating data from heterogeneous source systems into a single graph, and

- efficient data distribution/replication to optimize the execution of distributed graph operators.

Our prototype is built on top of the distributed dataflow framework Apache Flink™. The data model has been designed, and the operators have been implemented. A first use case is the BIIIG project for graph analytics in business information networks. In our ongoing work, we will look into different methods of operator tuning depending on the underlying dataflow system.
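To make the idea of a temporal graph model with operators more concrete, the following is a minimal single-machine sketch in Python. It is not Gradoop's API or its data model: the names `Interval`, `Vertex`, `Edge`, `TemporalGraph`, and the `snapshot` operator are hypothetical and chosen only for illustration. The sketch assumes that every vertex and edge carries a half-open validity interval and that an operator takes a graph and returns a new graph, so that operators can be chained into a pipeline.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Interval:
    """Half-open validity interval [start, end); end=None means still valid."""
    start: int
    end: Optional[int] = None

    def contains(self, t: int) -> bool:
        return self.start <= t and (self.end is None or t < self.end)


@dataclass
class Vertex:
    id: str
    label: str
    valid: Interval
    properties: Dict[str, object] = field(default_factory=dict)


@dataclass
class Edge:
    id: str
    label: str
    source: str
    target: str
    valid: Interval


@dataclass
class TemporalGraph:
    vertices: List[Vertex]
    edges: List[Edge]

    def snapshot(self, t: int) -> "TemporalGraph":
        """Hypothetical operator: keep only the elements valid at time t."""
        vs = [v for v in self.vertices if v.valid.contains(t)]
        alive = {v.id for v in vs}
        # An edge survives only if it is valid and both endpoints survive.
        es = [e for e in self.edges
              if e.valid.contains(t) and e.source in alive and e.target in alive]
        return TemporalGraph(vs, es)


# Illustrative data (invented for this sketch, not taken from the project).
graph = TemporalGraph(
    vertices=[
        Vertex("a", "Person", Interval(0)),
        Vertex("b", "Person", Interval(5)),
        Vertex("c", "Person", Interval(0, 8)),
    ],
    edges=[
        Edge("e1", "knows", "a", "b", Interval(6)),
        Edge("e2", "knows", "a", "c", Interval(1, 8)),
    ],
)

early = graph.snapshot(3)  # before vertex "b" exists
late = graph.snapshot(9)   # after vertex "c" has expired
```

Because `snapshot` returns another `TemporalGraph`, further operators could be applied to its result. A distributed implementation would express the same filtering as dataflow transformations over partitioned vertex and edge collections rather than as in-memory list comprehensions.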

Students

- Philip Fritzsche

- Timo Adameit

- Lucas Schons

Awards

Source Code

Talks

- GRADOOP - Scalable Graph Analytics with Apache Flink

- GRADOOP - Scalable Graph Analytics with Apache Flink

- Scalable Graph Analytics with GRADOOP and BIIIG

- Scalable Graph Analytics

- Skalierbare Graph-basierte Analyse und Business Intelligence

- (Cypher)-[:ON]->(ApacheFlink)<-[:USING]-(Gradoop)

- From Shopping Baskets to Structural Patterns

- Scalable Graph Data Analytics with GRADOOP

- Gut vernetzt: Skalierbares Graph Mining für Business Intelligence

- Scalable Graph Analytics with GRADOOP

- Distributed Graph Analytics with GRADOOP

Publications (31)

| Files | Cover | Description | Year |
|---|---|---|---|
| | | Rost, C.; Gomez, K.; Täschner, M.; Fritzsche, P.; Schons, L.; Christ, L.; Adameit, T.; Junghanns, M.; Rahm, E. VLDB Journal 2021 Special Issue Paper | 2021 / 5 |
| | | Rost, C.; Gomez, K.; Fritzsche, P.; Thor, A.; Rahm, E. 24th International Conference on Extending Database Technology (EDBT) | 2021 / 3 |
| | | Gomez, K.; Täschner, M.; Rostami, M.; Rost, C.; Rahm, E. Proc. Datenbanksysteme für Business, Technologie und Web (BTW) 2021 | 2021 / 3 |
| | | | 2019 / 11 |
| | | Gomez, K.; Täschner, M.; Rostami, M.; Rost, C.; Rahm, E. Techn. Report, Univ. of Leipzig, arXiv:1910.04493, Oct 2019 | 2019 / 10 |
| | | Rost, C.; Thor, A.; Fritzsche, P.; Gomez, K.; Rahm, E. Proc. of Intl. Workshop on Advances in managing and mining large evolving graphs (LEG@ECML-PKDD) | 2019 / 9 |
| | | | 2019 / 3 |
| | | | 2019 / 3 |
| | | Rostami, M.; Kricke, M.; Peukert, E.; Kühne, S.; Wilke, M.; Dienst, S.; Rahm, E. Datenbank-Spektrum | 2019 / 3 |
| | | Petermann, A. Dissertation, Univ. Leipzig | 2019 |